AI Adoption That Works: Practical Frameworks for AI-Accelerated Delivery

Executive Summary: Faster Code Migrations, New Zero-to-One Products, and Better, Quicker Prototyping Are Clear Early-Stage AI Successes

- In the summer of 2025, we interviewed 15 executives about leveraging AI to build better products faster. Their combined experience spanned VC-backed startups, PE-backed growth-stage companies, and public company boards. Most worked in software, and their level of AI readiness ranged from “dipping toe in the water” to “we use it for every task, every day.”

- Across the board, interviewees reported immense pressure for “more AI” from the board and CEO, with more reserved excitement from the C-Suite. Most wanted better primary measures of success.

- Our findings showed clear differences between higher and lower performing teams – both in terms of adoption and reported productivity gains. Key factors were a culture of experimentation with AI, ease of incorporating the latest tools, and sophistication in managing expectations of what AI can and cannot do well.

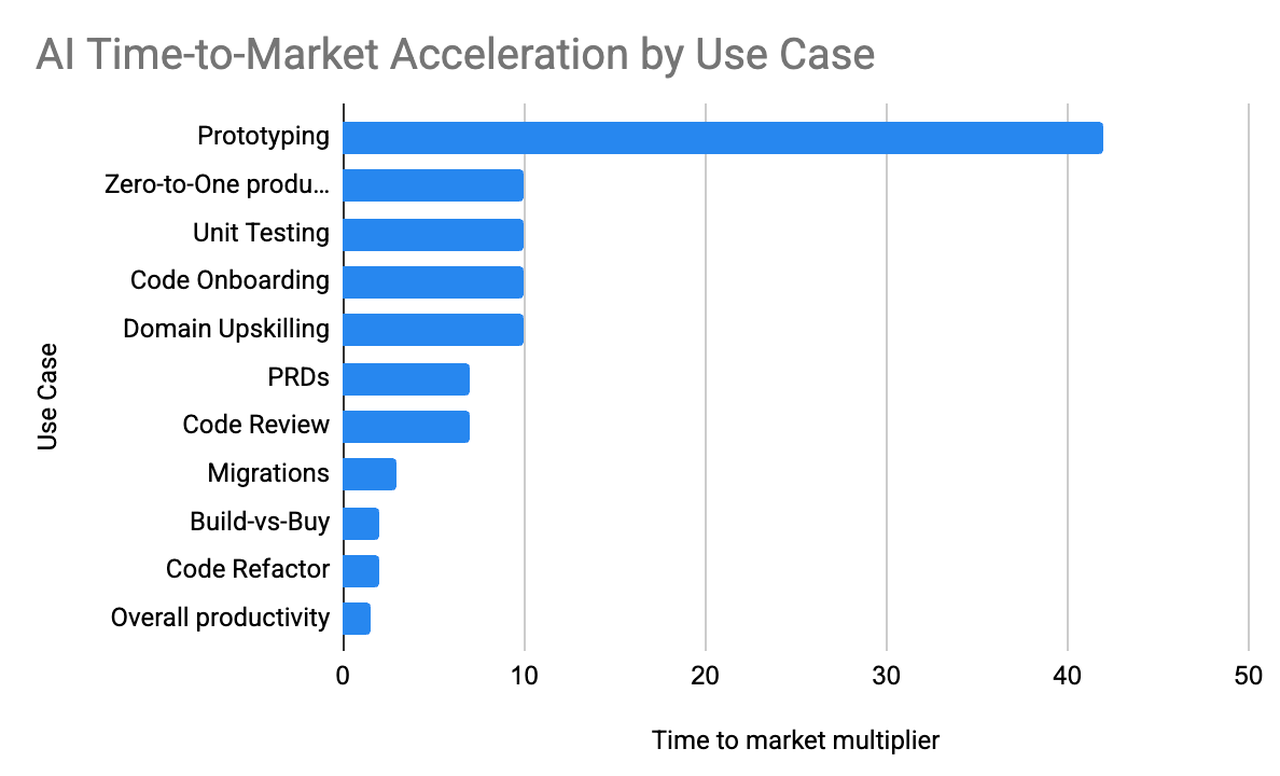

- We found compelling evidence of 10x time-to-market gains in specific areas, notably prototypes, zero-to-one features, and migrations: teams did work in days that would have taken weeks. We also found meaningful instances of spend avoidance in build-versus-buy decisions.

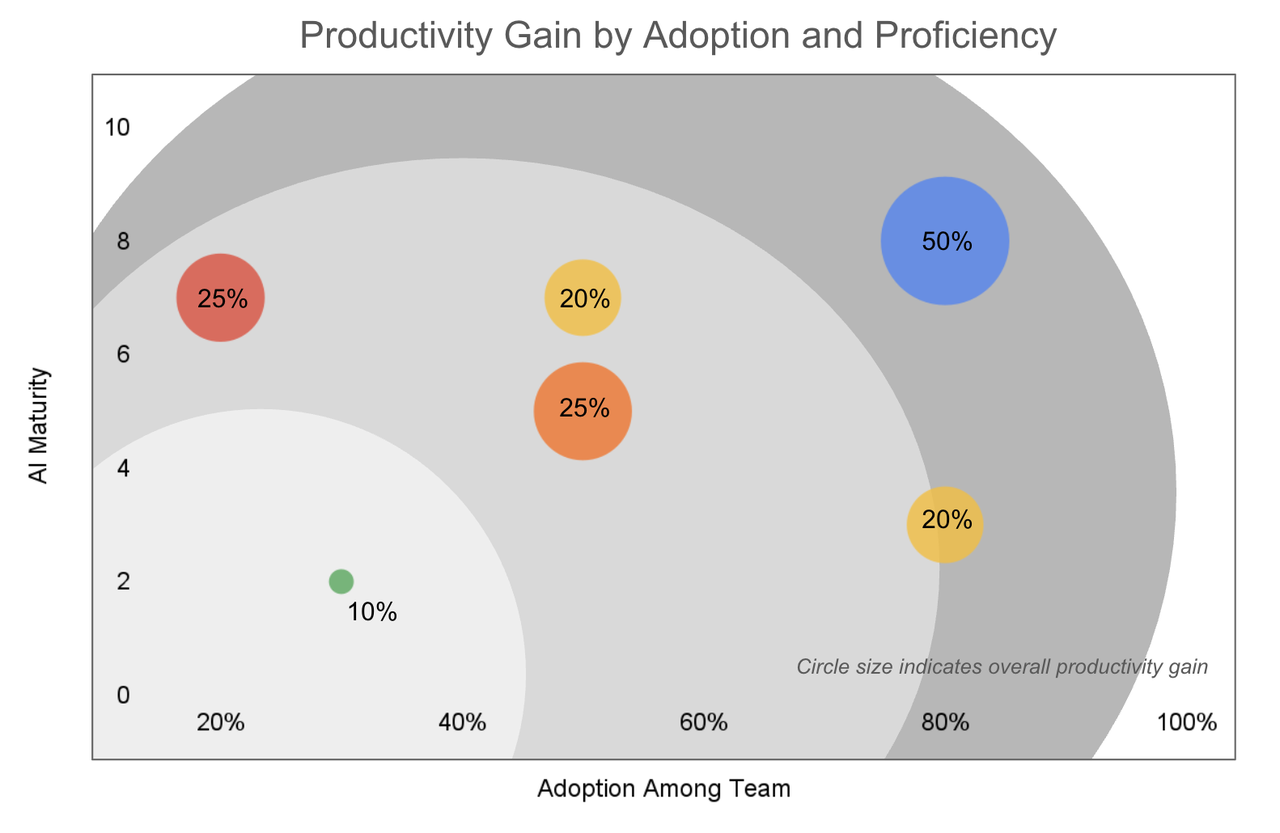

- All organizations reported widespread adoption at the individual level, especially for coming up to speed on a new market or using AI as a thought partner. Few companies had team-level deployments, but those that did reported 20%-50% overall time-to-market improvement. Most others estimated gains closer to 10%.

- We found several powerful best practices among the high-performing teams: 1) treat AI deployment like a change management project, including measuring sentiment and adoption, running training, and measuring results; 2) manage context explicitly, keep accountability with the human in the loop, and version prompts; and 3) proactively steer clear of ways AI can inadvertently dig you a hole you can’t get out of.

AI Is Eating The World Of Software

If you haven’t heard, Artificial Intelligence is everywhere. CEOs and business leaders at all levels know they need to adjust—whether leading a growth-stage startup, a mature enterprise, a public company, or one navigating strategic transition. What makes this moment unusual is how many disruptions are converging simultaneously under the singular label of “AI.” It is a productivity tool, a platform shift, a rapidly evolving customer expectation, a disruptive technology, a competitive threat, and a business model transformation all at once.

The closest parallel is the shift to Software-as-a-Service in the early 2000s. That transition moved software from on-premise to cloud, replaced perpetual licenses with subscriptions, shifted development methodologies from waterfall to agile, affected both B2B and B2C markets, unseated incumbents, and ushered in the modern venture capital era. But those changes materialized over years. AI’s transformation feels like it’s happening in months. One CEO described an engineer recreating a product in a weekend that had originally taken years to build. AI isn’t theoretical anymore, it’s an existential threat. It puts enormous pressure on both CEOs and their boards. One of the most common patterns we heard across dozens of interviews was simple: “Our CEO and board say more AI,” even when they don’t yet know where or how to apply it effectively.

Rather than resisting this pressure, we recommend using it to your advantage. As one leader told us, “transformational innovation must be led personally by the CEO” because it affects the entire company. While some teams might resist the change, product and engineering teams are already excited about AI and want to put it to use. In fact, as one participant observed, engineers are “actually excited about AI even beyond its commercial possibilities”; they love the underlying technology itself. The challenge isn’t generating enthusiasm; it’s figuring out where to apply it effectively. Our take: focus on the first principles of change management. As one executive put it, “Look for where you can be more efficient, not just what you can do with AI.”

The focus of this report is on how to build better products faster. Every company will need a unique AI strategy tailored to their customers and market, but we’ve identified universal practices emerging in product and engineering organizations: creating cultures of experimentation, setting realistic productivity expectations, building on proven successes, and avoiding common pitfalls that early adopters have already experienced.

Important Cultural Behaviors to Instill

The most successful AI implementations have surprisingly little to do with the technology itself. The adage “Culture eats strategy for breakfast” continues to ring true, even in the AI age. After interviewing dozens of product and engineering leaders, a clear pattern emerged: the companies thriving with AI aren’t necessarily the ones with the biggest budgets or the fanciest tools. They’re the ones that figured out how to make their people want to experiment, and how to fold the successful experiments into common use.

Build AI-Native Culture of Rapid Adoption and Psychological Safety

The best AI adopters share a few key behaviors that feel almost mundane. Take a government services company we spoke with, where the entire team operates under what their VP of Product calls “startup agility”. They can adopt new tools “without lengthy approval processes”. When ChatGPT Enterprise launched, they simply bought licenses for everyone. No committees, no pilot programs, no six-month evaluation cycles.

Of course, not every organization has this luxury. Regulated industries still need approval processes, and a financial services company we interviewed acknowledged they “cannot try tools like Gamma due to data governance contracts.” But the best companies in these constrained environments have figured out how to make approval transparent and lightweight. They pre-approve categories of tools, create fast-track evaluation processes, and maintain clear guidelines so teams know what they can experiment with immediately versus what needs formal review. In an environment where “current” expires every few weeks, a six-month tool cycle isn’t slow-moving. It’s strategically reckless.

But speed of adoption is just the beginning. The companies that really get it create what a VP of Engineering at a healthcare startup discovered works: dedicated space for experimentation without productivity pressure. “We opened sprint time for exploration without productivity expectations,” he explained, “and created a Slack channel for sharing wins/failures”. The magic was in giving people permission to fail publicly.

This kind of psychological safety produces measurable results. At a wildfire detection company, a simple two-hour hackathon “replaced typical 2-day hackathon timeline” and led to “daily Cursor usage doubling from 25% to 50% of the team”. Their engineering leader noticed something crucial: “Engineers sharing experimentation stories” became the norm, not the exception. When people start voluntarily talking about their AI experiments — both successes and failures — you know you’ve started to foster an AI culture.

One particularly telling metric comes from a Principal Engineer’s team survey at an e-commerce platform, where “half would be ‘very upset’ if tools were removed”. AI tools have become integral to how people think about their work.

Lead Through Curiosity and Experimentation

The most counterintuitive finding from our research is that successful AI adoption requires leaders to model vulnerability, not confidence. The companies that struggled were often led by executives who positioned themselves as AI experts from day one. The successful ones? Their leaders admitted they were learning alongside everyone else. As one participant noted, the key is setting realistic expectations up front: we “had no expectation of immediate productivity gains, more about building habits”. This is about building the muscle, not lowering the bar. Culture change is measured in months and years, not sprint cycles.

The best leaders we encountered created what a CTO at a private equity firm called “natural slowdown” rather than mandated change. They didn’t force adoption; they created conditions where adoption felt inevitable. They celebrated small wins, normalized intelligent failures, and most importantly, they demonstrated that experimenting with AI was part of the job.

In the end, building an AI-ready culture isn’t about technology at all. It’s about creating an environment where people feel safe to learn and grow while they figure out how to be permanently better. The companies that get this right don’t just adopt AI, they evolve into organizations that can adapt to whatever comes next.

The Productivity Paradox

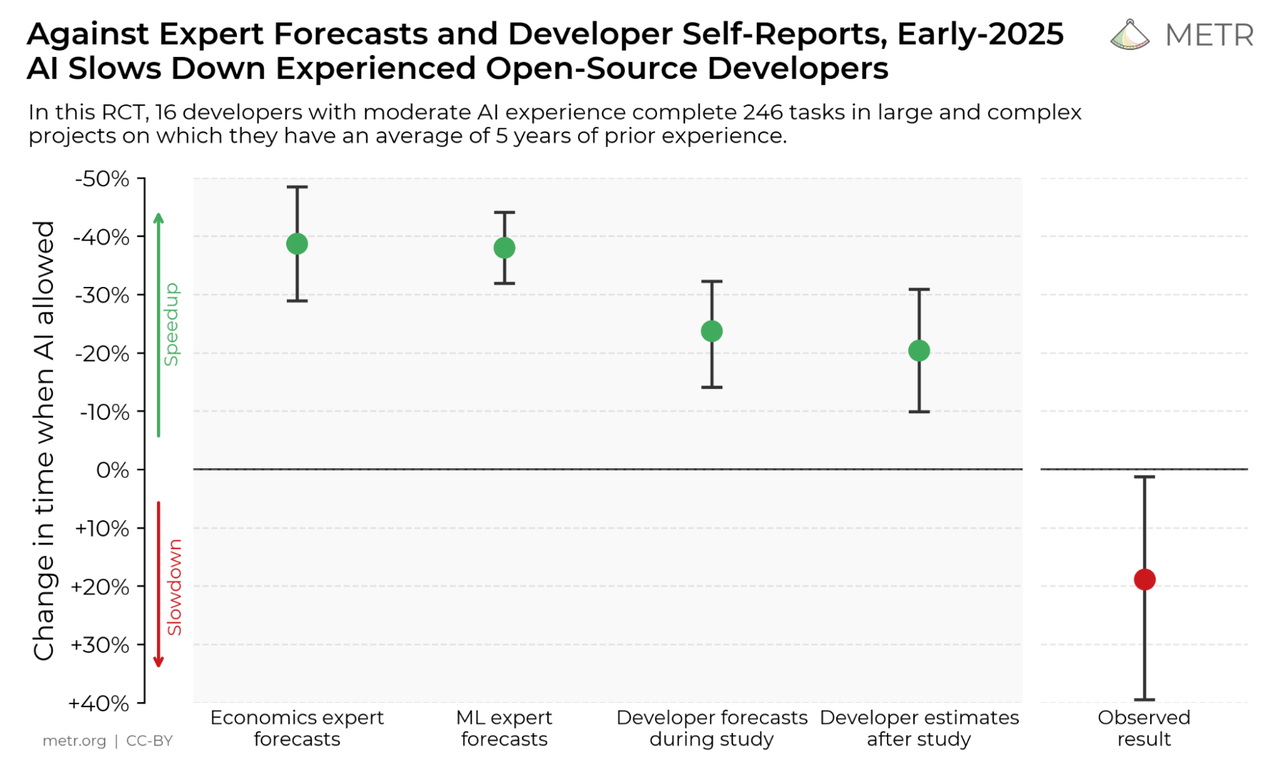

It’s rational to be skeptical: a METR study found that AI tools actually slowed down experienced developers by 19% (despite those developers believing they’d gotten 20% faster), Bain & Company’s 2025 Technology Report revealed that teams report productivity boosts of only 10-15%, McKinsey found that nearly 80% of companies using generative AI report no material bottom-line impact, and DORA’s 2025 research confirms that while AI adoption has become nearly universal in software development, it consistently increases delivery instability. The throughline is that AI’s impact is wildly context-dependent, shaped by task type, team maturity, workflow integration, and what you choose to measure.

That said, as we mentioned earlier, there are multiple reasons to adopt AI beyond productivity: competitive pressure, rapidly evolving customer expectations, and fundamental platform shifts. And because our focus here is how AI helps teams build better products faster, we have to look beyond individual productivity, where everyone we spoke with uses AI daily and swears by it, to team productivity. Even at the best companies, executives offered only gut-feel estimates of 10-20% overall gains. Yet these same leaders described compelling, measurable wins concentrated in two specific areas: time to market and the ability to build or do in-house what teams would otherwise have to buy or hire for.

Time to market

The clearest wins came from dramatically compressed timelines on projects that previously faced significant delays. As one leader put it: “Using Claude Code we completed a migration in days that a human alone had tried for multiple weeks without success.” The productivity gain wasn’t just speed; it was making previously impossible projects suddenly feasible. We saw this pattern repeatedly in migrations, prototyping, and learning new domains where AI accelerated teams past bottlenecks that had stalled progress for weeks or months.

Build versus buy, hire versus do

Perhaps more transformative were the cases where AI fundamentally changed the build-versus-buy calculus. A telehealth company added OCR for insurance cards directly into their app, a feature they previously would have outsourced or skipped entirely. In cases where “good enough” makes a huge difference, AI changed the economics of building in-house. We saw a similar pattern at another company that used OCR to process hunting and fishing licenses. For zero-to-one development, AI proved instrumental for a financial services company building a loan validation product: its CEO avoided the $200,000-$300,000 he otherwise would have spent hiring an engineering team. The productivity gain wasn’t measured in percentage points; it was measured in market entry that wouldn’t have happened at all. These weren’t marginal improvements; they were capabilities that enabled teams to build something they would have otherwise bought, do something in-house they would have otherwise hired for, or enter a market where they lacked sufficient expertise.

Of course, this comes with risks. Things that require expertise always seem easier from the outside than when you become intimately familiar with them, and AI dramatically accelerates this illusion. It’s much easier to build something that looks polished but is poorly constructed underneath. We expect to see a proliferation of shoddy products and unmet expectations in the near future as businesses and consumers recalibrate around trust and confidence in the brands and products on which they rely. How do you, as an innovation leader, avoid this fate?

Where AI actually delivers

After months of conversations with product and engineering leaders, we kept hearing the same refrain: AI delivers “step function changes in specific areas”. Not gradual improvements—dramatic leaps in capability that fundamentally change how work gets done. The key insight? These aren’t broad productivity gains across everything. They’re concentrated bursts of efficiency in very specific, well-defined tasks.

Prototyping: The Clear Winner

The most conclusive evidence of AI impact shows up in prototyping workflows. A former Chief Product Officer we interviewed found a “100x efficiency gain for initial MVP development”, building multiple product iterations in a single week that would have taken months traditionally. Similarly, a CTO at a private equity firm reported a “2-3x velocity increase for new projects” and described “transforming PRD creation from 1 day to 1 hour”.

What makes prototyping so perfect for AI? It’s the sweet spot between creativity and structure. You need something that works and looks real, but you don’t need it to be production ready or bulletproof. A VP Engineering at a wildfire detection company put it perfectly: their team built demos to “inspire PM/design teams” through “vibe coding”. The code quality was poor, but the “demo achieved the mission” of demonstrating the concept.

The pattern holds across industries. Tools like Lovable.dev and V0 have evolved to generate not just functional prototypes, but ones with “better designs with more personality” and “stronger brand incorporation”. The result is that product teams can test concepts and get customer feedback in days rather than quarters.

Other Wins For Migrations, Code Reviews, Education, and Writing

Software and platform migrations were highlighted by interviewees as ideal candidates for AI tooling. A Principal Engineer described a Datadog to Grafana dashboard migration that took a human “weeks of work” per dashboard; Windsurf got them “50% of the way there,” but Claude achieved “80-90% completion in one week”. The key advantage? Migrations involve repeated patterns of work with known end states, making them easier for AI tools to automate. As the same engineer noted, “visual validation was possible” for dashboards, unlike the complex code review requirements needed for custom development.

Code reviews and testing have become table stakes at leading organizations. Engineers report that AI “catches missed issues” in code review and excels at “code cleanup and unit test generation”. A Director of Product Engineering noted that AI agents are “excellent at unit tests”.

Learning and education applications are transforming how teams onboard and understand complex systems. AI helps engineers parse unfamiliar codebases and accelerates “learning new fields/industries”. One participant described using AI as a “thought partner” that requires “background context and feedback” but dramatically reduces the time to understand new domains.

Requirements writing shows some of the most measurable time savings. A Director of Product reported that a requirements document that normally takes “two to three hours to write” can now be done in fifteen minutes using AI as a thought partner. A VP of Product at a government services company explained their workflow: “AI creates initial draft, team refines from there”. This collaborative approach to writing has become standard practice among the most successful adopters we interviewed, turning what was once a solo endeavor into an AI-enabled collaborative editing process.

Pro Tips to Increase AI Productivity

The most innovative organizations we studied shared common practices for successful AI adoption:

- Fast-track new tools to allow teams to experiment. Expect tools and models to change quickly. Whitelist tool categories and publish clear criteria. Make decisions fast: “For us, authorizing a new tool is not a big deal. We huddle, make a decision, and run.” Avoid getting entrenched in any single toolset.

- Set organizational adoption goals to get people using new tools. The right culture won’t guarantee success, but the wrong one will guarantee failure. Establish baselines through sentiment analysis of internal channels. Recognize legitimate hesitancies: concerns about AI performance, cross-functional toe-stepping as “each function can now do shitty versions of other teams’ work,” and the fear of looking dumb. Create spaces where teams can share wins and voice concerns openly.

- Focus attention first on experimentation and learning. Create dedicated channels for sharing both wins and failures. Model vulnerability by sharing your own experiments first. Frame early investment as “education budget, not productivity budget.” Set realistic expectations: “what I want is people who are open to experiment and try things out and learn as it goes… lessons you learn right now are good for six or maybe twelve months.”

- Keep humans accountable at each step. Recognize that AI cannot take responsibility for its actions—by definition, being accountable means being “required or expected to justify actions or decisions,” which doesn’t yet apply to AI. Establish clear patterns like “AI can reject but not approve.”

- Treat prompts like product code and engineer them. Use incremental prompting with step-by-step validation rather than one-shot requests. Source-control your prompts. Practice “n-shot prompting” where you give AI examples of your desired output format, then ask for a new one that follows your structure and level of detail.

- Leverage voice input for efficiency. LLMs unlock the power of voice by letting you speak your intent rather than dictate text—you can explain conversationally, revise mid-thought, and describe outcomes while the AI generates the actual output. As one leader put it: “Voice is the new interface for programming.”
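The “n-shot prompting” tip above can be sketched in a few lines. This is a minimal illustration rather than any interviewee's actual tooling: the `Example` class, the `build_n_shot_prompt` function, and the sample user stories are all hypothetical names and data invented for this sketch. It assumes the examples would normally live in a source-controlled file alongside the code.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Example:
    """One worked example: a request and the response format we want echoed."""
    request: str
    response: str


def build_n_shot_prompt(instruction: str, examples: list[Example], new_request: str) -> str:
    """Assemble a prompt that shows n examples, then asks for one more in the same shape."""
    parts = [instruction.strip(), ""]
    for i, ex in enumerate(examples, start=1):
        parts.append(f"Example {i} request: {ex.request}")
        parts.append(f"Example {i} response: {ex.response}")
        parts.append("")
    parts.append(f"New request: {new_request}")
    parts.append("Respond in the same structure and level of detail as the examples.")
    return "\n".join(parts)


# Hypothetical examples; in practice these would be checked into version control.
EXAMPLES = [
    Example("Password reset", "As a user, I can reset my password via an emailed link."),
    Example("CSV export", "As an analyst, I can export a report as CSV."),
]

prompt = build_n_shot_prompt(
    "Write a one-sentence user story for the requested feature.",
    EXAMPLES,
    "Dark mode",
)
print(prompt)
```

Because the prompt is assembled from plain data, a change to an example or to the instruction shows up as an ordinary diff, which is one way to read “source-control your prompts.”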

Anti-patterns

Every successful AI implementation is successful in its own way, but failures tend to follow predictable patterns. After dozens of interviews, we’ve identified the most common ways organizations undermine their own AI efforts, often while believing they’re being thoughtful and strategic.

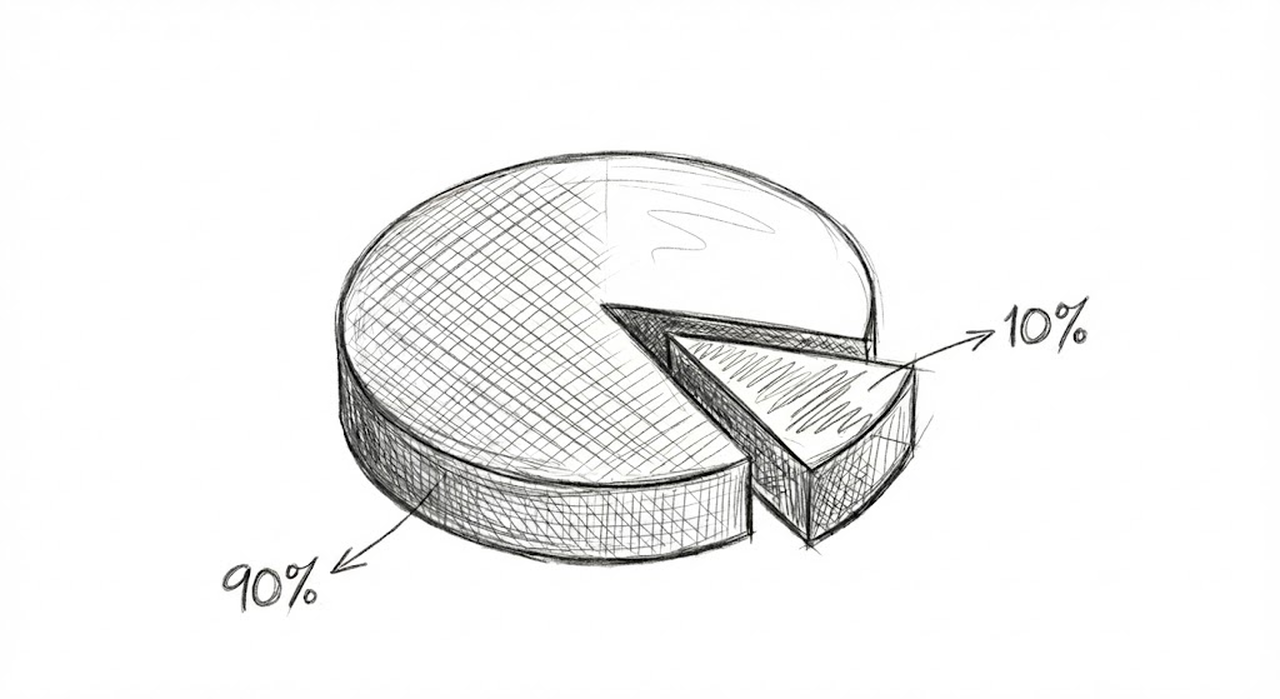

The 90% to 100% Trap

The most insidious anti-pattern is what we call the “90% to 100% trap.” As one executive explained: “AI gets you 90% there so fast you assume it’s complete”, but that “remaining 10% is extremely time-consuming”. Teams must communicate this reality upward to prevent unrealistic expectations from derailing projects.

This pattern plays out everywhere. A former CPO discovered that while AI delivered “100x efficiency gain for initial MVP development,” the “10% of time spent fixing AI-generated code” increases “dramatically after initial prototype”. The danger isn’t in the fixing, it’s in not planning for it. Organizations that treat AI output as nearly finished work consistently underestimate the human effort required for production readiness.

The psychological element makes this worse. Teams experience such rapid initial progress that they assume the final stretch will be equally fast. When it isn’t, frustration sets in, and AI gets blamed for “not working” rather than being inappropriately scoped.

Expertise Blindness

Perhaps the most counterintuitive failure mode is what we’ve termed “expertise blindness”: the tendency for people to trust AI in unfamiliar domains while being deeply skeptical in their own areas of expertise. As one participant put it: “It seems so adept at [everyone else’s expertise], but not my own.”

This creates a peculiar dynamic where the people most qualified to evaluate AI’s output in their domain are also most resistant to using it. A healthcare VP Engineering was surprised by the “amount of replacement fear among ICs”—the individual contributors who should theoretically be most excited about productivity tools were the most worried about being displaced.

The pattern extends beyond fear into perfectionism. Expert users develop what one participant called a “don’t lie to me” mentality, focusing obsessively on AI’s inaccuracies rather than its capabilities. They get stuck hunting for edge cases rather than leveraging the 90% of cases where AI works well.

Treating AI as Fixed Technology

Many organizations approach AI as if it were traditional enterprise software: something you evaluate once, purchase, and deploy for years. This mindset leads to elaborate evaluation processes that make sense for database systems but are counterproductive for rapidly evolving AI capabilities.

A former CPO learned this lesson the hard way: “Every attempt we made to fine tune made it worse”. Fine-tuning reduces what he called the “magic” of generic answering capability, and “corporate data usually insufficient for quality training.” Yet organizations continue pursuing custom models when off-the-shelf solutions would work better.

The smarter approach? Build for model agility from day one. The same executive now recommends creating “benchmark test suite for each model” and running “tests whenever new models are released” to enable “easy switching to cheaper/better models”.
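One way to picture a “benchmark test suite for each model” is a small harness that runs the same cases against every candidate and reports a pass rate. The sketch below is an assumption-laden illustration, not the executive's actual setup: the benchmark cases, the `pass_rate` and `compare` helpers, and the two stub models are hypothetical, and real provider clients are deliberately left out.

```python
from typing import Callable

# A "model" here is any function from prompt text to response text.
# The stubs below are placeholders for real provider clients.
Model = Callable[[str], str]

# Hypothetical benchmark cases: (prompt, check applied to the response).
BENCHMARK: list[tuple[str, Callable[[str], bool]]] = [
    ("What is 2 + 2? Answer with a number only.", lambda r: r.strip() == "4"),
    ("Reply with the word OK.", lambda r: "ok" in r.lower()),
]


def pass_rate(model: Model) -> float:
    """Run every benchmark case against one model and return the fraction passed."""
    passed = sum(1 for prompt, check in BENCHMARK if check(model(prompt)))
    return passed / len(BENCHMARK)


def compare(models: dict[str, Model]) -> dict[str, float]:
    """Score each candidate on the same cases so a switch rests on shared evidence."""
    return {name: pass_rate(model) for name, model in models.items()}


# Stub models standing in for the current model and a newly released one.
def current_model(prompt: str) -> str:
    return "4" if "2 + 2" in prompt else "Sure."


def new_model(prompt: str) -> str:
    return "4" if "2 + 2" in prompt else "OK"


scores = compare({"current": current_model, "new": new_model})
print(scores)  # {'current': 0.5, 'new': 1.0}
```

Rerunning `compare` whenever a new model is released keeps the switching decision tied to the same tests rather than to a one-time evaluation.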

How We Evaluated Maturity

Throughout our research, we asked participants to self-assess their organization’s AI maturity on a 1-10 scale. The responses revealed fascinating patterns in how leaders think about progress. Our most mature participant, a VP of Product at a government services company, rated their team “7-8/10 (high adoption across organization)”, while a financial services organization with low AI adoption also claimed “7/10 maturity”.

What became clear is that raw numbers don’t tell the whole story. The most sophisticated assessment came from the wildfire detection company leader, who preferred “percentile-based scoring over absolute measures” and wanted to “maintain ’early mainstream’ position as technology evolves”. He understood something crucial: AI maturity isn’t about reaching a fixed destination, it’s about maintaining relative position as the entire field advances.

Our research suggests a more nuanced framework where scores 1-2 represent “negative productivity during tool adoption/training,” score 5 means you’ve “tried tools with some gains but not integrated into SDLC,” score 7 indicates “2x productivity improvement with good integration,” and score 10 represents “10x improvement with culture of continuous experimentation”. The key insight? True maturity isn’t measured by the sophistication of your AI tools—it’s measured by your organization’s ability to continuously adapt as those tools evolve.

Closing Thoughts

Looking ahead, AI is going to keep getting cheaper, more capable, and more embedded in everyday product and engineering work, which means the differentiator will not be who picked the “right” model or tool at a point in time, but who built an organization that can keep learning as the ground shifts. The companies we saw succeeding treated AI as a fast-moving platform shift and designed for constant adaptation: lightweight approvals, space to experiment without fear, leaders willing to learn out loud, and habits that turn local wins and failures into shared capability. In practice, the safest long-term bet is to invest less in any single tooling decision and more in a durable learning culture that rewards curiosity, makes experimentation part of the job, and steadily tightens the loop from insight to shipped value, because that cultural agility is what will compound while the tools themselves inevitably change.

About The Authors

Brad Taylor

Brad is a hands-on, mission-driven engineering executive who has led teams across a variety of industries, including healthcare, accessibility, gaming, pets and payments. He is currently a fractional CTO and advisor to tech startups in healthtech and climate change. Previously, he served as CTO of Nursa and Galileo, and led multiple engineering verticals at Marqeta through their IPO. Early in his career, Brad led the development of the world’s first open-source electronic medical record, OpenVista CIS, while at Medsphere.

Nolan Myers

Nolan Myers is the owner and Principal Consultant of TCGen, where he helps organizations harness new technologies to accelerate innovation and deliver better products to market. A Harvard-trained computer scientist with early roots in the arts, Nolan has led product and engineering teams in building solutions used by millions — from an assessment platform adopted by half of California’s public schools to interactive digital textbooks and a collaborative publishing system used by McGraw-Hill, Pearson, Starbucks, and McDonald’s. He holds three patents for innovations in digital content creation and has built global, high-performing teams, most notably as Founder and CEO of DoubleGDP. Today, Nolan applies this blend of technical depth, product leadership, and innovation experience to help companies unlock business results with the strategic use of AI.
